Forget Sora? Why Kandinsky 5.0 Is the Open-Source Reality Check the AI Video World Needed

Let’s address the elephant in the server room: We have all been waiting for the “Sora moment” to actually trickle down to us mere mortals. While OpenAI and Google DeepMind play 4D chess behind closed API doors with Sora and Veo, the open-source community has been grinding away, looking for a way to democratize high-fidelity video generation. And honestly? The wait might just be over.

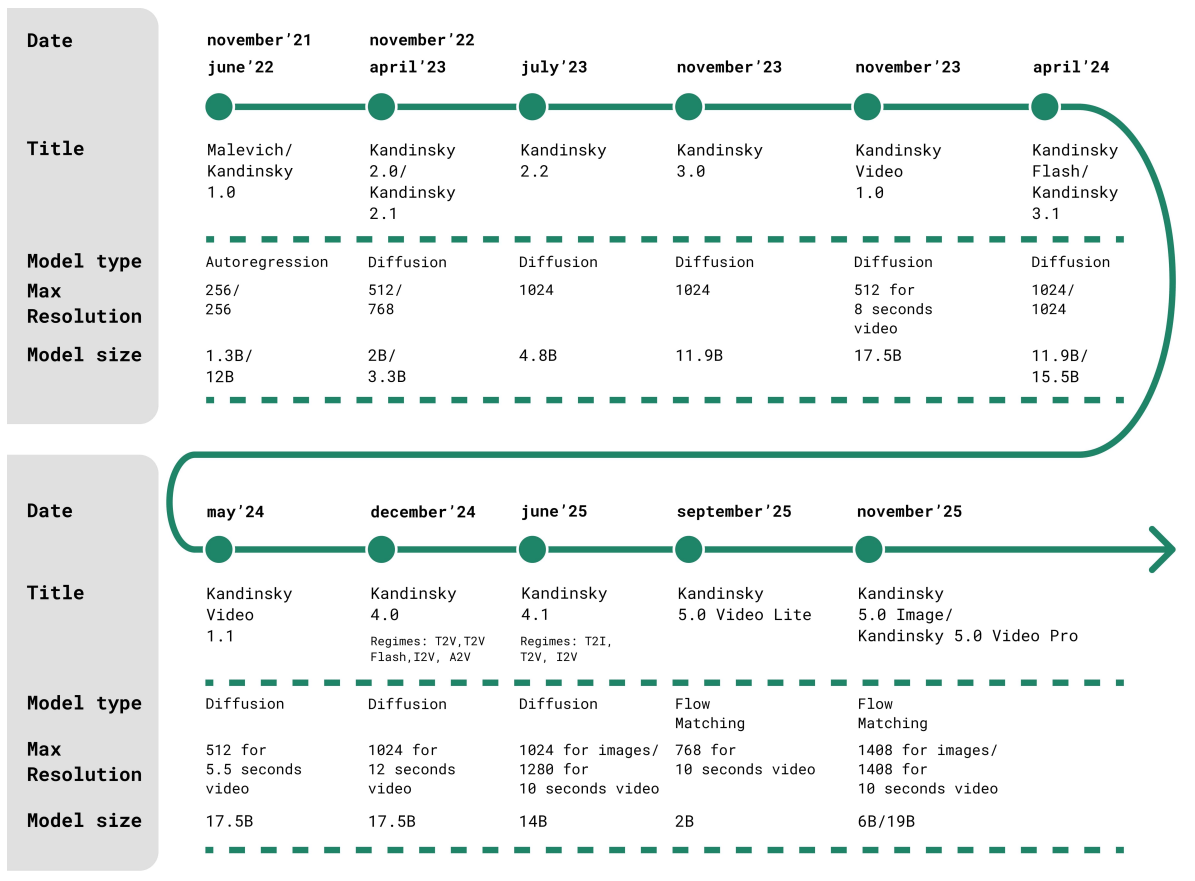

Enter Kandinsky 5.0. If you haven’t been tracking the Kandinsky Lab lineage, now is the time to start paying attention. This isn’t just another incremental update with a slightly better FID score. This is a complete architectural overhaul that effectively throws down the gauntlet to proprietary giants.

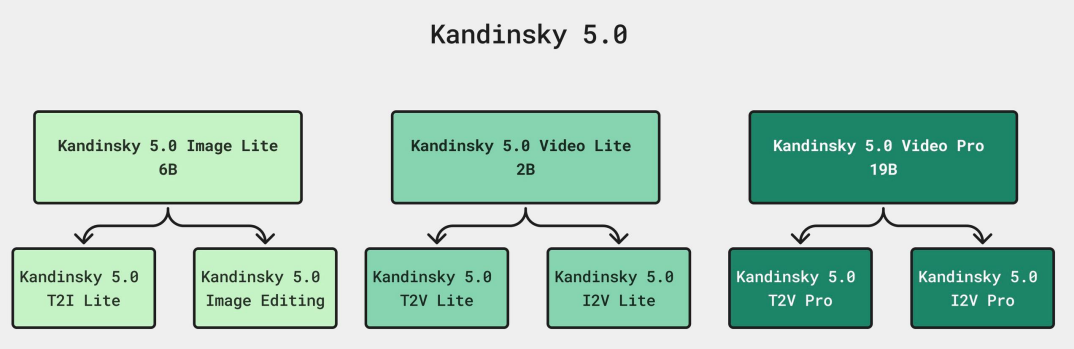

Here is the bottom line: We are looking at a family of models that doesn’t just promise 10-second, high-resolution video generation—it actually delivers it with code and weights you can likely run yourself (if you have the VRAM). From a massive 19-billion parameter “Pro” beast to a nimble 2-billion parameter “Lite” model, Kandinsky 5.0 is making a compelling case that the future of generative media isn’t gated; it’s open.

Driving the news is a shift away from standard diffusion techniques toward Flow Matching and a novel attention mechanism called NABLA that solves one of the biggest headaches in AI video: computational cost. Let’s dive deep into why this release changes the landscape for developers and creators in the US and beyond.

The Triple Threat: One Size Does Not Fit All

In the past, “foundation model” releases usually meant one big, unwieldy file that required an H100 cluster to run. Kandinsky Lab has taken a smarter, more segmented approach here, acknowledging that a hobbyist with a gaming PC and a Hollywood VFX studio have vastly different needs. The 5.0 release is actually a lineup of three distinct powerhouses:

- Kandinsky 5.0 Image Lite (6B): Don’t let the “Lite” fool you. At 6 billion parameters, this is a hefty text-to-image model designed for high aesthetic fidelity and prompt adherence. It’s the bread-and-butter tool for static visuals.

- Kandinsky 5.0 Video Lite (2B): This is arguably the most exciting piece for the average developer. It’s a 2-billion parameter text-to-video and image-to-video model. It’s lightweight and optimized for speed without turning your output into a glitchy mess.

- Kandinsky 5.0 Video Pro (19B): The heavyweight champion. With 19 billion parameters, this model is gunning for state-of-the-art (SOTA) quality, capable of generating 10-second clips at resolutions pushing 1408 pixels. This is where the comparison to Veo and Sora becomes legitimate.

“The segmentation here is smart. By separating the ‘Lite’ swiftness from the ‘Pro’ fidelity, Kandinsky isn’t just releasing a model; they are releasing a production pipeline.”

Under the Hood: Flow Matching and the NABLA Breakthrough

To understand why Kandinsky 5.0 is a big deal, we have to get a little technical, but stick with me, because this is where the magic happens. The team ditched the older architectures for a Cross-Attention Diffusion Transformer (CrossDiT) built on the Flow Matching paradigm. In plain English? The model learns a “velocity” that pushes random noise, step by step, toward a clean image along a simple straight-line path, which is a more efficient way to teach the AI how to turn noise into crystal-clear pixels.
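To make “turn noise into pixels” a bit more concrete, here is a minimal NumPy sketch of the flow matching recipe, assuming the common straight-line (“rectified flow”) convention where t = 0 is noise and t = 1 is data. The function names and the one-image toy dataset are hypothetical; in Kandinsky 5.0 the velocity would come from the CrossDiT network rather than a closed-form formula, and the report’s exact time convention and schedule may differ.

```python
import numpy as np

def flow_matching_pair(x_data, x_noise, t):
    """One training example under an assumed straight-path convention.

    t = 0 is pure noise and t = 1 is clean data; the network would be trained
    to predict v_target when given x_t and t (e.g. with a mean-squared error).
    """
    x_t = (1.0 - t) * x_noise + t * x_data  # point on the straight noise-to-data path
    v_target = x_data - x_noise             # constant velocity along that path
    return x_t, v_target

def euler_sample(velocity_fn, x_noise, num_steps):
    """Generate by integrating dx/dt = v(x, t) from t = 0 to t = 1 with Euler steps."""
    x = x_noise.copy()
    dt = 1.0 / num_steps
    for step in range(num_steps):
        t = step * dt
        x = x + dt * velocity_fn(x, t)
    return x

# Toy "dataset" holding a single 4-pixel image, so the ideal velocity field
# has a closed form: v(x, t) = (target - x) / (1 - t).
target = np.array([0.2, -0.5, 0.9, 0.0])

def ideal_velocity(x, t):
    return (target - x) / (1.0 - t)

noise = np.random.default_rng(0).standard_normal(4)
sample = euler_sample(ideal_velocity, noise, num_steps=4)
print(np.allclose(sample, target))  # True
```

Because the toy paths are perfectly straight, four Euler steps land exactly on the target. With real data the learned paths bend, which is one reason practical samplers still use many more steps.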

But the real kicker—the secret sauce that makes generating 10 seconds of HD video possible without burning down a data center—is something they call NABLA (Neighborhood Adaptive Block-Level Attention).

Why NABLA Matters

Video generation is computationally expensive. Standard attention mechanisms (the way the AI “pays attention” to different parts of a video) scale quadratically with the number of tokens, because every patch of every frame is compared with every other one. That means if you double the video length, the computational cost doesn’t just double; it roughly quadruples. This is a big reason most open-source models tap out at 2 to 4 seconds.
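A quick back-of-the-envelope calculation shows why the cost runs away so fast. The resolution, frame rate, and patch size below are illustrative assumptions, not Kandinsky’s actual settings.

```python
def num_tokens(frames, height, width, patch=16):
    # Hypothetical tokenizer: one token per 16x16 pixel patch in every frame.
    # Real video models compress space and time with a VAE first, which shrinks
    # these numbers but does not change the quadratic trend below.
    return frames * (height // patch) * (width // patch)

def attention_pairs(n_tokens):
    # Full self-attention scores every token against every other token: n^2 pairs.
    return n_tokens * n_tokens

five_sec = num_tokens(frames=24 * 5, height=768, width=512)   # 5 s at 24 fps
ten_sec = num_tokens(frames=24 * 10, height=768, width=512)   # 10 s at 24 fps

print(ten_sec / five_sec)                                    # 2.0
print(attention_pairs(ten_sec) / attention_pairs(five_sec))  # 4.0
```

Twice the footage means twice the tokens, but four times the attention work.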

NABLA changes the calculus. It uses a sparse attention mechanism that dynamically works out which blocks of the video (across both space and time) actually need to attend to each other and skips the rest. According to their technical report, this yields a 2.7x reduction in training and inference time at 90% sparsity. That is a massive efficiency gain. It allows the Video Pro model to handle sequences longer than 5 seconds without the quality degrading into a blur of artifacts.
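The general idea of adaptive block-sparse attention can be sketched as: score coarse blocks cheaply, keep only the blocks that carry most of the attention, and skip the rest. This is a hypothetical NumPy toy inspired by the NABLA description; the average pooling, the cumulative `keep_mass` threshold, and all names are assumptions, and it is not the team’s implementation.

```python
import numpy as np

def block_mask(q, k, block, keep_mass=0.9):
    """Toy adaptive block-level mask (NABLA-inspired, not the official algorithm).

    1. Average-pool queries and keys into blocks of `block` tokens.
    2. Softmax over the small block-to-block score map.
    3. Per query block, keep the most relevant key blocks until their
       probabilities sum to at least `keep_mass`; everything else is skipped.
    """
    n, d = q.shape
    assert n % block == 0, "sequence length must be a multiple of the block size"
    nb = n // block
    qb = q.reshape(nb, block, d).mean(axis=1)
    kb = k.reshape(nb, block, d).mean(axis=1)
    scores = qb @ kb.T / np.sqrt(d)
    probs = np.exp(scores - scores.max(axis=1, keepdims=True))
    probs /= probs.sum(axis=1, keepdims=True)

    mask = np.zeros((nb, nb), dtype=bool)
    for i in range(nb):
        order = np.argsort(probs[i])[::-1]  # most relevant key blocks first
        cumulative = np.cumsum(probs[i][order])
        n_keep = min(int(np.searchsorted(cumulative, keep_mass)) + 1, nb)
        mask[i, order[:n_keep]] = True
    return mask

def masked_attention(q, k, v, mask, block):
    """Attention restricted to the kept blocks.

    For clarity this still builds the full n x n score matrix; a real sparse
    kernel would never compute the skipped blocks, which is where the speedup
    comes from.
    """
    token_mask = np.repeat(np.repeat(mask, block, axis=0), block, axis=1)
    scores = np.where(token_mask, q @ k.T / np.sqrt(q.shape[1]), -np.inf)
    weights = np.exp(scores - scores.max(axis=1, keepdims=True))
    weights /= weights.sum(axis=1, keepdims=True)
    return weights @ v

# Toy "local" inputs: the 4 tokens in each block share one direction, so every
# block mostly resembles itself (real video attention is messier than this).
n, d, block = 64, 16, 4
q = 5.0 * np.eye(d)[np.arange(n) // block]
k = q.copy()
v = np.random.default_rng(0).standard_normal((n, d))

mask = block_mask(q, k, block)
out = masked_attention(q, k, v, mask, block)
print(f"sparsity: {1 - mask.mean():.0%}")  # sparsity: 94%
```

Note that `keep_mass` controls how much attention probability is preserved, not the sparsity directly: the more concentrated the attention, the more blocks get dropped. On these tidy toy inputs each block keeps only itself, so 15 of every 16 blocks are skipped.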

The Data “Soup”: A Masterclass in Curation

We often say in SEO and AI: “Garbage in, garbage out.” The Kandinsky team seems to have taken this to heart with a borderline obsessive data curation pipeline. They didn’t just scrape the internet and hit “train.” They employed a sophisticated multi-stage process involving aesthetic scoring, watermark detection (crucial for avoiding legal headaches), and synthetic captioning using massive Vision Language Models (VLMs) like Qwen2.5-VL.
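As a rough illustration, a multi-stage curation pass like this boils down to a chain of checks applied to every clip’s metadata. The `Clip` fields, the 5.0 aesthetic cutoff, the stage order, and `fake_vlm` (a stand-in for a call to a VLM such as Qwen2.5-VL) are all hypothetical; the report’s actual scorers and thresholds are not reproduced here.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List

@dataclass
class Clip:
    path: str
    aesthetic_score: float  # hypothetical 0-10 score from an aesthetic predictor
    has_watermark: bool     # hypothetical output of a watermark detector
    caption: str = ""

def curate(clips: Iterable[Clip],
           caption_fn: Callable[[Clip], str],
           min_aesthetic: float = 5.0) -> List[Clip]:
    """Keep clean, good-looking clips and give each one a synthetic caption."""
    kept = []
    for clip in clips:
        if clip.has_watermark:                    # stage: drop watermarked footage
            continue
        if clip.aesthetic_score < min_aesthetic:  # stage: drop low-aesthetic footage
            continue
        clip.caption = caption_fn(clip)           # stage: re-caption with a VLM
        kept.append(clip)
    return kept

clips = [
    Clip("a.mp4", aesthetic_score=6.8, has_watermark=False),
    Clip("b.mp4", aesthetic_score=7.5, has_watermark=True),
    Clip("c.mp4", aesthetic_score=3.1, has_watermark=False),
]

def fake_vlm(clip: Clip) -> str:
    # Placeholder for a real vision-language model call (e.g. Qwen2.5-VL).
    return f"placeholder caption for {clip.path}"

kept = curate(clips, fake_vlm)
print([c.path for c in kept])  # ['a.mp4']
```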

But here is the fascinating part regarding their Supervised Fine-Tuning (SFT). They used a “model soup” approach. Instead of training one model on everything, they:

- Clustered their data into 9 specific domains (Nature, Architecture, Food, People, etc.).

- Fine-tuned separate models for each domain.

- Averaged the weights of these models into a final “soup.”

This technique, often used in LLMs but less common in video, aims to make the model a jack of all trades and a master of many. It helps keep the model from forgetting how to draw a cat just because it learned how to draw a skyscraper. Furthermore, for the image generation model, they implemented Reinforcement Learning (RL)-based post-training. This is the same family of alignment techniques that helped make ChatGPT sound human, applied here to make images look more photorealistic and adhere better to prompts.
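The “soup” step itself is simple: every parameter tensor is averaged across the domain-specific fine-tunes. Here is a minimal sketch with plain NumPy arrays standing in for real checkpoints. The uniform weighting and all names are assumptions, since the article does not say whether Kandinsky weights the domains equally.

```python
import numpy as np

def model_soup(state_dicts, weights=None):
    """Average the parameters of several fine-tuned copies of one model.

    Assumes every copy has the same architecture and was fine-tuned from the
    same pretrained checkpoint; averaging unrelated models does not work.
    Uniform weights are used unless others are given.
    """
    if weights is None:
        weights = [1.0 / len(state_dicts)] * len(state_dicts)
    return {
        name: sum(w * sd[name] for w, sd in zip(weights, state_dicts))
        for name in state_dicts[0]
    }

# Hypothetical domain experts: small perturbations of one shared base model.
rng = np.random.default_rng(0)
base = {"proj.weight": rng.standard_normal((4, 4)), "proj.bias": np.zeros(4)}
domains = ["nature", "architecture", "food"]  # 3 of the 9 domains, for brevity
experts = [
    {name: p + 0.01 * rng.standard_normal(p.shape) for name, p in base.items()}
    for _ in domains
]
soup = model_soup(experts)
print({name: p.shape for name, p in soup.items()})
```

Because all experts start from the same base, their weights stay close enough that the average remains a working model rather than a blend of incompatible networks.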

Context: The Open Source Counter-Punch

Let’s zoom out a bit. The AI video space in 2024 and 2025 has been defined by a “look but don’t touch” mentality from the big US labs. Sora dazzled us, but it is available only through OpenAI’s own apps and subscriptions, with no downloadable weights. Google’s Veo is impressive but gated. This created a vacuum that Chinese labs (like the teams behind Wan and Hunyuan) and decentralized collectives have tried to fill.

Kandinsky has a history here. From their early diffusion models (2.1, 2.2) to the flashier 3.0, they have consistently pushed the envelope. However, 5.0 represents a shift from “catching up” to “leading the pack.”

The Comparison Game

In side-by-side human evaluations (the gold standard for AI testing), Kandinsky 5.0 Video Pro holds its own. Against Google’s Veo 3, the Kandinsky Pro model actually scored higher in “Visual Quality” and “Motion Dynamics,” although Veo still edges it out in strict prompt following (complex instruction adherence). Against the Wan 2.1 models, Kandinsky 5.0 Video Lite showed superior motion consistency.

This is significant. We are talking about an open-weight model going toe-to-toe with proprietary tech backed by billions of dollars in compute. For a US-based developer or media company, this means you can potentially host a model in-house that rivals the cloud-based giants, avoiding data privacy issues and API costs.

Expert Analysis: The Implications for Creators

As someone who has analyzed the SEO and content landscape for 15 years, the release of Kandinsky 5.0 signals a pivot point for content production. Here is why:

1. The Death of the Stock Footage Cost Center

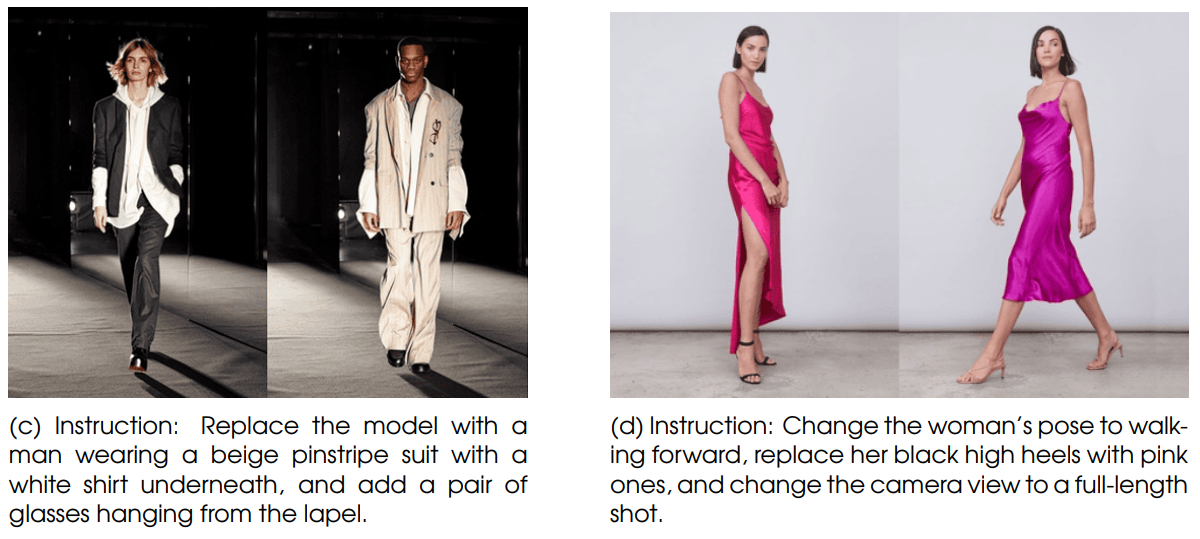

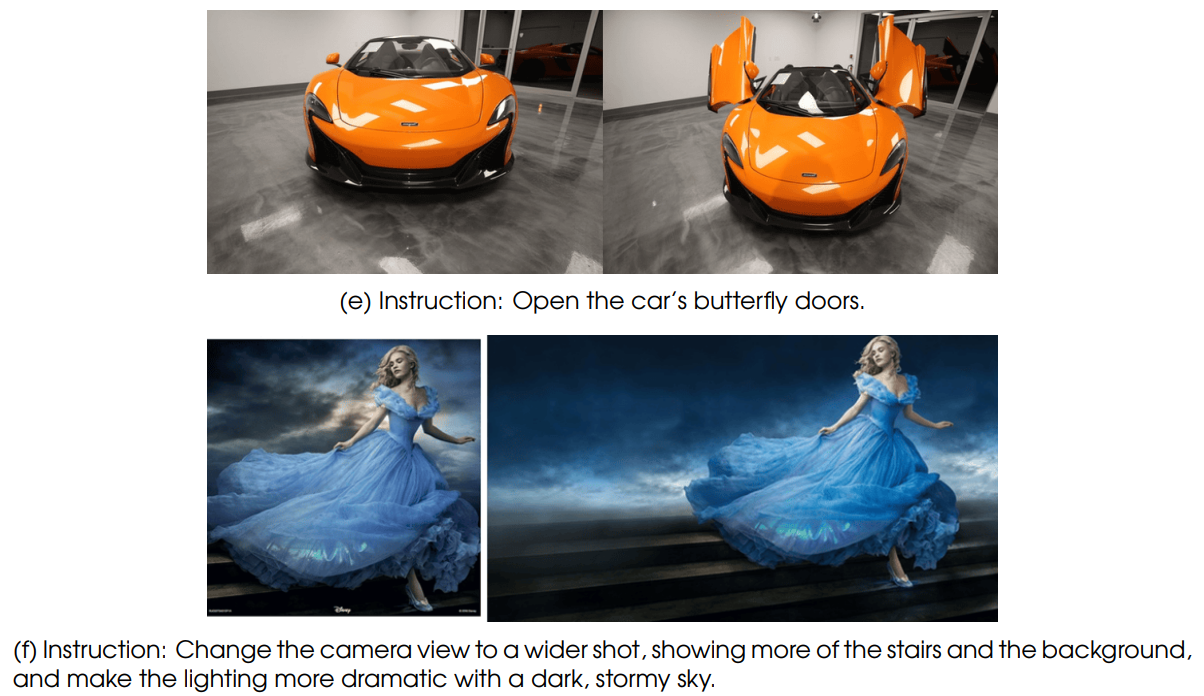

With the Video Lite model being efficient enough for consumer GPUs, and the Pro model delivering broadcast quality, the barrier to creating custom B-roll is vanishing. You no longer need to pay for a generic Getty Images clip; you can generate the exact 10-second shot of “a cybernetic coffee cup exploding into feathers” (yes, the model can actually do that) for pennies in electricity.

2. The Rise of “Flash” Distillation

The report highlights a “Flash” version of their video models. By using Adversarial Diffusion Distillation, they cut the inference steps from 100 down to roughly 16. Since each step is a full pass through the network, that is roughly a sixfold cut in the main cost of generating a clip, enough to move waits from minutes toward seconds. For interactive applications (think video games or real-time educational tools), this speed is non-negotiable.

3. Ethical Guardrails (or Lack Thereof?)

The team has released the code under the MIT license, which is incredibly permissive. While they have done rigorous data filtering to remove watermarks and low-quality data, the open nature means the “safety filter” responsibility shifts to the deployer. In the US, where deepfake legislation is heating up, companies deploying Kandinsky 5.0 will need to implement their own moderation layers. The model doesn’t come with a built-in “nanny filter,” which is a double-edged sword—great for artistic freedom, risky for liability.

“The 10-second barrier has always been the ‘uncanny valley’ of video AI. Anything longer than 4 seconds usually turned into a morphing nightmare. Kandinsky 5.0’s architecture seems to have solved the temporal consistency issue for longer clips.”

Future Outlook: What’s Next?

So, where do we go from here? The Kandinsky 5.0 report hints at a future where the lines between image and video models completely dissolve. We are moving toward “multimedia foundation models”—single architectures that handle text, image, video, and likely audio (though Kandinsky 5.0 focuses on the visual).

For the immediate future, expect the Hugging Face community to rip this model apart—in a good way. We will likely see quantized versions that run on even lower specs, LoRAs (Low-Rank Adaptations) that teach the model specific styles (like 1950s anime or corporate Memphis art), and integrations into tools like ComfyUI within weeks.
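For readers new to LoRA, the core trick fits in a few lines: freeze the pretrained weight and learn only a small low-rank correction. This is a generic NumPy sketch of the standard LoRA technique with assumed rank and scaling values; it is not tied to any Kandinsky-specific adapter format or to ComfyUI’s loaders.

```python
import numpy as np

class LoRALinear:
    """A frozen linear layer plus a trainable low-rank update (standard LoRA)."""

    def __init__(self, weight, rank=4, alpha=8.0, seed=0):
        out_dim, in_dim = weight.shape
        rng = np.random.default_rng(seed)
        self.weight = weight                                 # frozen pretrained W
        self.A = 0.01 * rng.standard_normal((rank, in_dim))  # trainable, small random init
        self.B = np.zeros((out_dim, rank))                   # trainable, zero init
        self.scale = alpha / rank

    def __call__(self, x):
        # y = x W^T + scale * x A^T B^T; during fine-tuning only A and B change.
        return x @ self.weight.T + self.scale * (x @ self.A.T) @ self.B.T

    def merged_weight(self):
        # Fold the update into W after training, so inference costs nothing extra.
        return self.weight + self.scale * (self.B @ self.A)

def lora_param_count(out_dim, in_dim, rank):
    # Trainable numbers in A (rank x in_dim) plus B (out_dim x rank).
    return rank * (in_dim + out_dim)

layer = LoRALinear(np.random.default_rng(1).standard_normal((8, 8)))
x = np.ones((2, 8))
print(np.allclose(layer(x), x @ layer.weight.T))                # True: B starts at zero
print(lora_param_count(1024, 1024, rank=8), "vs", 1024 * 1024)  # 16384 vs 1048576
```

The appeal for style adapters is the parameter count: a rank-8 update to a 1024 x 1024 projection trains about 1.6% as many numbers as the full matrix.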

The bottom line? If you were holding your breath for Sora, you can exhale. Kandinsky 5.0 isn’t just a placeholder; for many use cases, it’s the solution we have been waiting for. It’s messy, it’s complex, and it requires some hardware—but it’s yours to control. And in the world of AI, ownership is everything.
