6 Pivotal Stages of AI Evolution: From Rule-Based Logic to Generative Power

What is the AI Evolution?

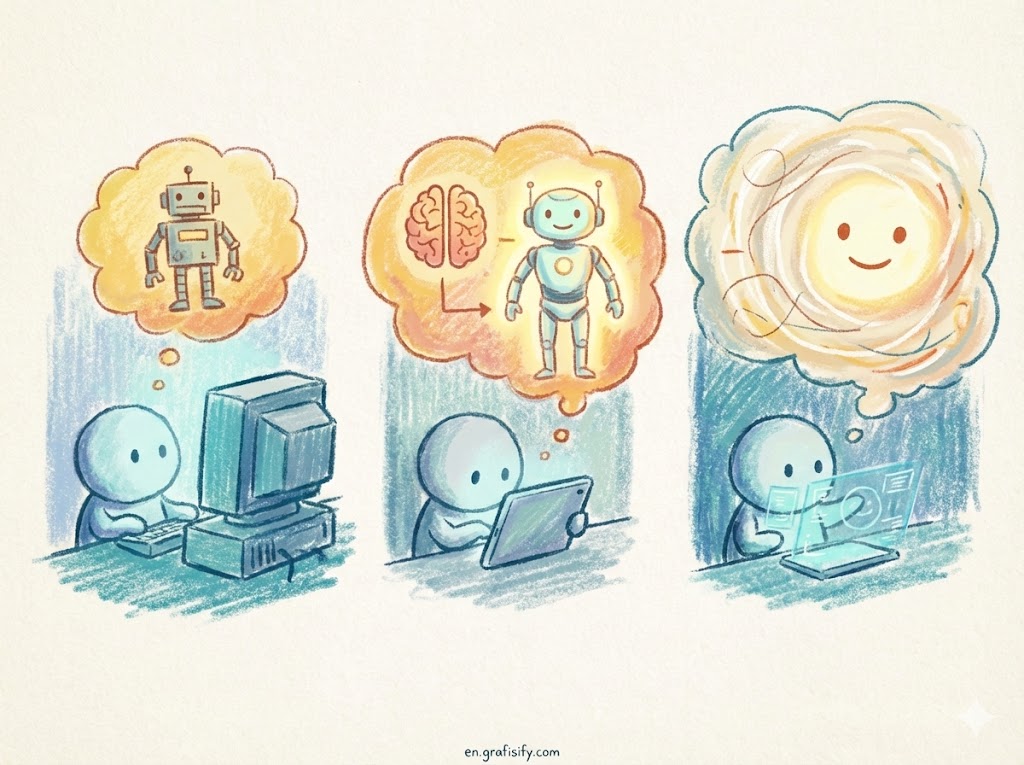

The AI Evolution is the historical timeline of artificial intelligence development, transitioning from “Symbolic AI” (hard-coded rules and logic) to “Connectionist AI” (neural networks and deep learning), culminating in today’s Generative AI. It represents the shift from teaching computers what to think, to teaching them how to learn.

The 6 Eras of AI: How We Got Here

To truly grasp how tools like Gemini and ChatGPT work, we have to look back at the earlier work they build on. Here are the 6 definitive eras of the AI Evolution.

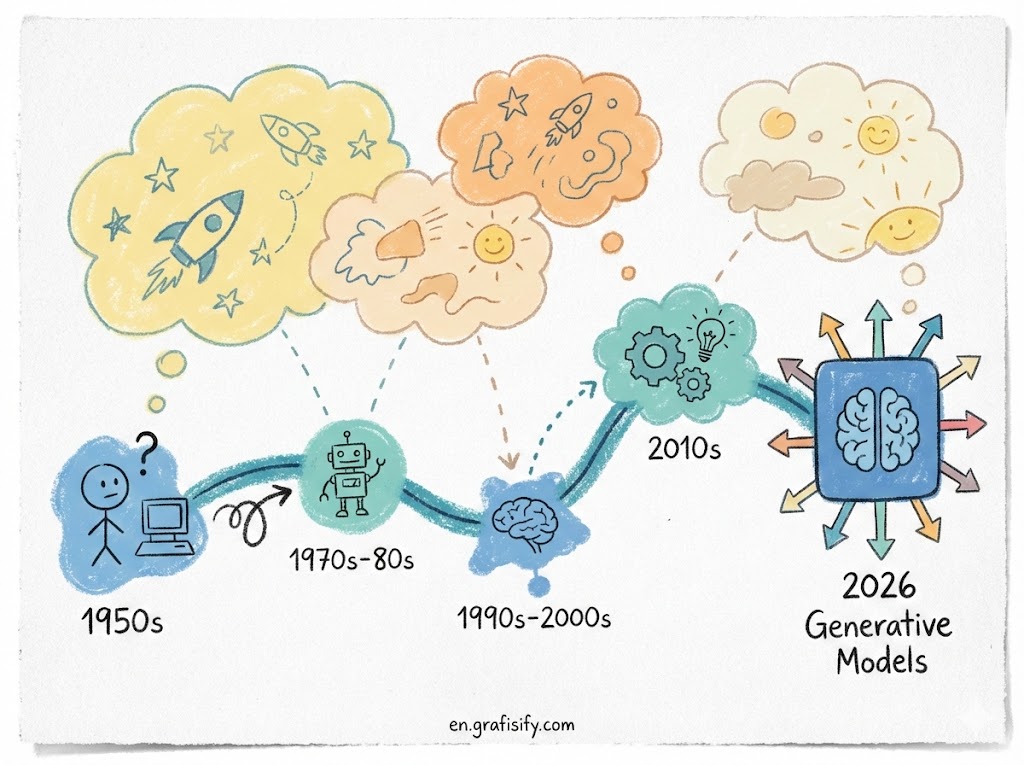

1. The Birth and The Turing Test (1950s-1960s)

Long before we were generating images of cats in space suits, the AI Evolution began with a simple question asked by Alan Turing: “Can machines think?” This era was defined by Symbolic AI, or “GOFAI” (Good Old-Fashioned AI). It was purely theoretical and mathematical. Researchers believed that if you could describe the world in symbols and logic, a machine could replicate intelligence.

During this time, the focus was on proving concepts. The Logic Theorist (1956) is often considered the first AI program, capable of proving mathematical theorems. However, these systems had zero learning capability. They were rigid, brittle, and only worked in highly controlled environments. It was the era of optimism, where pioneers thought human-level intelligence was just a summer project away. For a deeper dive into these foundational concepts, you can check the archives of the Turing Test history on Wikipedia.

2. The Era of Expert Systems (1970s-1980s)

As reality set in, funding and enthusiasm dried up during the first “AI Winter” of the mid-1970s, and the field only recovered with the rise of Expert Systems. This is the “Rule-Based System” era. Instead of trying to simulate a human brain, developers focused on simulating a human expert in a narrow field. These systems relied on massive “If-Then” logic chains.

Imagine a digital doctor. If the patient has a fever (Input A) and a cough (Input B), then diagnose the flu (Output C). These systems were useful in industries like chemical analysis and financial planning. However, they couldn’t handle ambiguity. If a situation fell outside their pre-programmed rules, the system either had no answer or gave a nonsensical one. They encoded expertise, but they could not learn or generalize beyond it.
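
The digital doctor above can be sketched in a few lines of Python. This is only an illustration of the If-Then idea, not how any historical expert system was built: the `RULES` table and the `diagnose` function are hypothetical names, and the second rule is made up to show that every case has to be written by hand.

```python
# Hypothetical rule base: each rule pairs required symptoms with a conclusion.
RULES = [
    ({"fever", "cough"}, "flu"),
    ({"sneezing", "itchy eyes"}, "allergy"),  # made-up second rule
]


def diagnose(symptoms):
    """Return the conclusion of the first rule whose conditions are all present."""
    observed = set(symptoms)
    for conditions, conclusion in RULES:
        if conditions <= observed:  # every required symptom was observed
            return conclusion
    # No rule matched: a rule-based system has nothing to fall back on.
    return None


print(diagnose(["fever", "cough"]))     # flu
print(diagnose(["fever", "headache"]))  # None
```

The second call shows the brittleness described above: a combination nobody wrote a rule for simply produces no answer.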

3. The Machine Learning Renaissance (1990s-2000s)

This was the turning point in the AI Evolution. We moved away from telling computers exactly what to do (rules) and started feeding them data so they could figure out the rules themselves. This is the advent of Machine Learning (ML). Algorithms like decision trees and Support Vector Machines (SVMs) became the standard.

Suddenly, spam filters became effective because they “learned” what spam looked like based on thousands of examples, rather than a programmer manually listing every scam word in the dictionary. This era coincided with the explosion of the internet, providing the massive datasets required to train these statistical models.
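
To make "learning from examples" concrete, here is a minimal sketch of a Naive Bayes text classifier, one common statistical approach to spam filtering. The four training messages are invented for illustration, and the function names `train` and `classify` are assumptions rather than any particular library's API. A real filter would train on many thousands of messages.

```python
import math
from collections import Counter

# Toy, made-up training data: (message, label) pairs.
TRAIN = [
    ("win free money now", "spam"),
    ("free prize claim now", "spam"),
    ("meeting agenda for monday", "ham"),
    ("lunch on monday", "ham"),
]


def train(examples):
    """Count how often each word appears under each label."""
    word_counts = {}
    label_counts = Counter()
    for text, label in examples:
        label_counts[label] += 1
        word_counts.setdefault(label, Counter()).update(text.split())
    vocab = set()
    for counts in word_counts.values():
        vocab.update(counts)
    return word_counts, label_counts, vocab


def classify(text, model):
    """Pick the label with the highest log-probability under Naive Bayes."""
    word_counts, label_counts, vocab = model
    total_docs = sum(label_counts.values())
    best_label, best_score = None, -math.inf
    for label in label_counts:
        score = math.log(label_counts[label] / total_docs)  # prior
        total_words = sum(word_counts[label].values())
        for word in text.split():
            # Laplace (add-one) smoothing so unseen words do not zero out the score.
            count = word_counts[label][word] + 1
            score += math.log(count / (total_words + len(vocab)))
        if score > best_score:
            best_label, best_score = label, score
    return best_label


model = train(TRAIN)
print(classify("free money", model))      # spam
print(classify("monday meeting", model))  # ham
```

Nobody listed "free" as a scam word here. The model inferred it from the word counts in the examples, which is the shift from rules to data that this era introduced.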

4. Deep Learning and The Neural Network Boom (2010s)

If Machine Learning was the spark, Deep Learning was the gasoline. Thanks to the arrival of powerful GPUs (originally meant for video games), researchers could finally run “Neural Networks” with many layers, hence “Deep” Learning. These networks were loosely modeled on the structure of the human brain, and they followed that model more closely than any earlier approach.

This era gave us computer vision that actually worked (tagging friends on Facebook) and voice assistants like Siri. The defining moment was likely in 2012 with AlexNet dominating the ImageNet competition. The system didn’t just match patterns; it extracted features (edges, textures, shapes) hierarchically. This was the muscle that would eventually power the generative bots we know today.
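
The idea of extracting an edge feature can be illustrated with a single convolution, the basic operation inside networks like AlexNet. This is a simplified sketch: the `VERTICAL_EDGE` filter below is written by hand for illustration, whereas a trained network learns its filters from data and stacks many such layers. The tiny `IMAGE` and the function name `convolve2d` are assumptions for this example.

```python
def convolve2d(image, kernel):
    """Slide the kernel over the image (no padding) and sum the products."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(
                image[r + i][c + j] * kernel[i][j]
                for i in range(kh)
                for j in range(kw)
            )
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


# Toy 4x4 image: dark left half (0), bright right half (1).
IMAGE = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]

# Hand-written filter that responds to a dark-to-bright vertical edge.
VERTICAL_EDGE = [
    [-1, 1],
    [-1, 1],
]

feature_map = convolve2d(IMAGE, VERTICAL_EDGE)
for row in feature_map:
    print(row)
# [0, 2, 0]
# [0, 2, 0]
# [0, 2, 0]
```

The feature map lights up only in the column where the image changes from dark to bright. Deeper layers combine many such maps into textures and shapes.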

5. The Transformer Architecture Shift (2017)

You cannot discuss modern AI without mentioning the 2017 paper “Attention Is All You Need” by Google researchers. This introduced the “Transformer” architecture. Before this, language models built on recurrent networks processed text one word at a time and struggled with long-range context: by the end of a long passage, they had often lost track of how it began.

Transformers changed the game by allowing the model to pay “attention” to different parts of a sentence simultaneously, regardless of distance. Because every position is processed in parallel, training could scale to massive models and web-scale text collections. The Transformer is the “T” in GPT (Generative Pre-trained Transformer). Without this specific leap in the AI Evolution, current LLMs would not exist.
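
The core of that mechanism is scaled dot-product attention, which can be sketched in plain Python. This is a minimal illustration under simplifying assumptions: a real Transformer first passes each token through learned projections to get queries, keys, and values, and runs several attention heads in parallel. Here the raw token vectors are reused for all three roles, and the three 2-dimensional `tokens` are made up.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention: each query mixes all values by similarity."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Compare this query against every key, wherever it sits in the sequence.
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys
        ]
        weights = softmax(scores)
        mixed = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights


# Made-up token vectors; the first and third tokens are identical.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
outputs, weights = attention(tokens, tokens, tokens)
print([round(w, 3) for w in weights[0]])
```

The first token gives equal weight to itself and to the identical third token, and less to the second, even though the third token is farther away. Position plays no role in the score, which is what "regardless of distance" means here.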

6. The Generative AI Age (2022-Present)

We have arrived at the current peak. We moved from discriminative AI (which classifies data: “This is a cat”) to Generative AI (which creates data: “Here is a new drawing of a cat”). Models like ChatGPT (OpenAI) and Gemini (Google) are Large Language Models (LLMs) that predict the next token in a sequence with uncanny accuracy.
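
Next-token prediction can be shown at toy scale with a bigram model, which predicts the next word by counting which words followed the current one in its training text. This is only a sketch of the prediction idea: real LLMs use Transformer networks over subword tokens and much longer context, not word counts. The `CORPUS` string and the function names are made up for illustration.

```python
from collections import Counter, defaultdict

# Made-up training text.
CORPUS = "the cat sat on the mat . the cat ate the fish ."


def build_bigram_model(text):
    """Count, for every word, which words followed it in the text."""
    counts = defaultdict(Counter)
    tokens = text.split()
    for current, following in zip(tokens, tokens[1:]):
        counts[current][following] += 1
    return counts


def next_token_probs(model, token):
    """Convert follower counts into a probability for each candidate next word."""
    followers = model.get(token, Counter())
    total = sum(followers.values())
    return {tok: n / total for tok, n in followers.items()}


def generate(model, start, max_tokens=5):
    """Greedy generation: always append the most likely next word."""
    out = [start]
    for _ in range(max_tokens):
        followers = model.get(out[-1])
        if not followers:
            break
        out.append(followers.most_common(1)[0][0])
    return " ".join(out)


model = build_bigram_model(CORPUS)
print(next_token_probs(model, "the"))  # {'cat': 0.5, 'mat': 0.25, 'fish': 0.25}
print(generate(model, "the", max_tokens=1))  # the cat
```

The model has no notion of whether "the cat" is true, only that it is the most frequent continuation in what it has seen.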

This stage is characterized by “emergent behavior”: capabilities the models weren’t explicitly trained for but developed anyway, such as coding or translating obscure languages. It is the culmination of the previous five stages: the logic of the 50s, the expert rules of the 80s, the data of the 90s, the neural nets of the 2010s, and the architecture of 2017. For a technical breakdown of how these models scale, IBM’s overview of Generative AI offers excellent technical insights.

Future-Proofing: How to Adapt to the Change

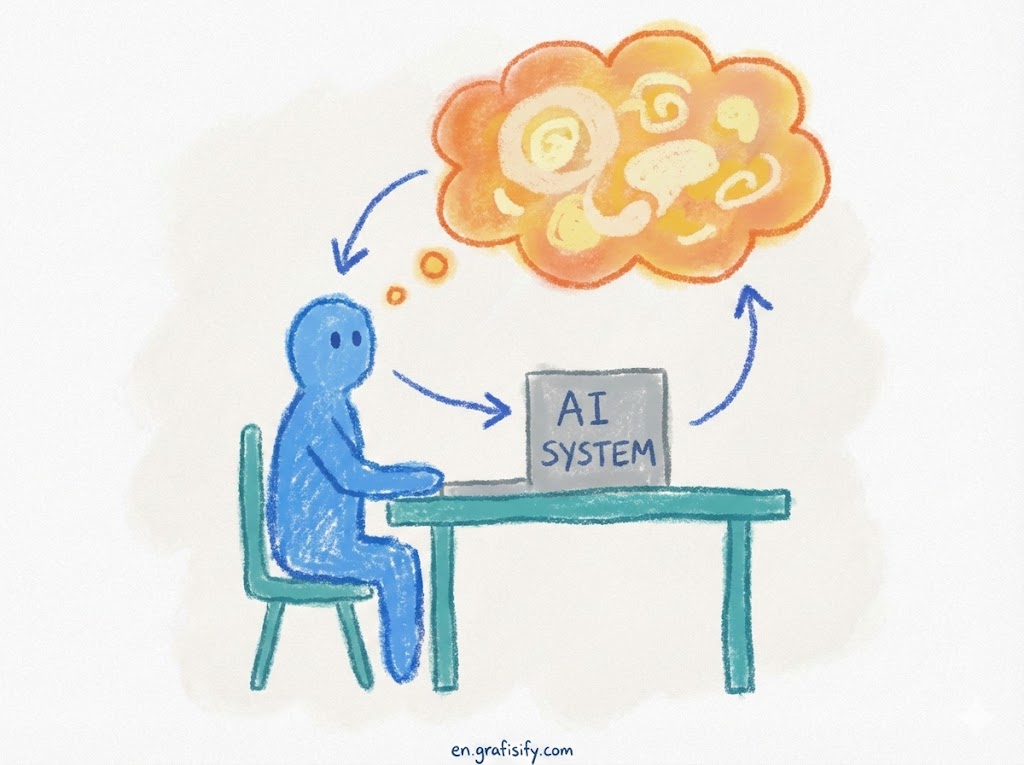

Now that we understand the AI Evolution, the question isn’t “what happened?” but “what now?” The shift from rule-based systems to probabilistic engines requires a change in how we interact with software. It’s no longer about command lines; it’s about prompt engineering.

The most common mistake businesses make today is treating Generative AI like an Expert System (Era 2). They expect 100% rigid accuracy (like a calculator) from a system designed for probabilistic creativity. To succeed, you must adopt a “human-in-the-loop” workflow. Use AI for the heavy lifting of generation, but rely on human expertise for verification and context.
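
A human-in-the-loop workflow can be sketched as a small pipeline in which nothing is published until a reviewer has approved it. Everything here is hypothetical: `generate_draft` stands in for a call to a generative model, and `example_reviewer` stands in for a person, who in practice would read and correct the draft rather than run a string check.

```python
def generate_draft(prompt):
    """Hypothetical stand-in for a generative model call; returns a canned draft."""
    return f"Draft answer for: {prompt}"


def human_in_the_loop(prompt, generate, review):
    """Generate a draft, then let a reviewer approve, edit, or reject it."""
    draft = generate(prompt)
    approved, final_text = review(draft)
    return {
        "draft": draft,
        "approved": approved,
        # Only reviewed text is ever exposed as the final result.
        "final": final_text if approved else None,
    }


def example_reviewer(draft):
    """Hypothetical reviewer: in a real workflow this step is a person."""
    if "answer" not in draft:
        return False, ""
    return True, draft + " [verified by reviewer]"


result = human_in_the_loop("Summarize the Q3 report", generate_draft, example_reviewer)
print(result["approved"], result["final"])
```

The design choice is that the model's output is treated as a draft by default. The `final` field stays empty unless the review step explicitly signs off.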

Pros & Cons of This Evolution

✅ The Good

- Democratization of Creativity: Generative AI lowers the barrier to entry for coding, writing, and design.

- Unprecedented Efficiency: Tasks that took weeks (like data analysis or drafting) now take minutes.

- Complex Problem Solving: Modern AI can find patterns in medical data or climate models that humans simply cannot see.

❌ The Bad

- The “Black Box” Problem: Unlike rule-based systems, we often don’t know why a neural network made a specific decision.

- Hallucinations: Generative models can confidently state false facts because they are trained to produce likely-sounding text, not to verify it.

- Bias and Ethics: Models trained on internet data inherit the internet’s biases, leading to skewed outputs.

Final Thoughts

The AI Evolution is a testament to human persistence. We went from writing if-then statements on punch cards to conversing with models that can pass the Bar Exam. However, we are still in the early days of the Generative era. The technology is powerful, but it requires guided supervision to be truly effective.

As we look toward Artificial General Intelligence (AGI), the lessons from the rule-based era remind us that structure is still necessary amidst the creative chaos.
